Rate of return

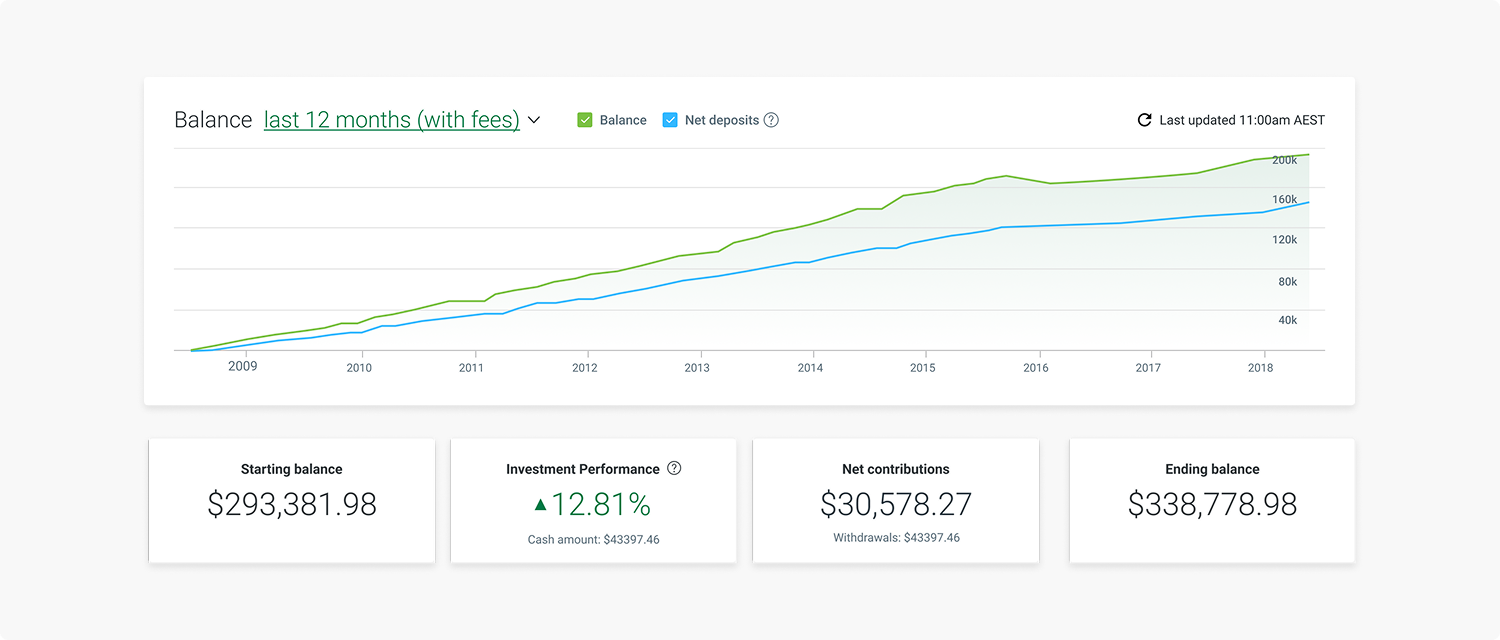

Led the experience design in building a foundation for IOOF’s portfolio performance graphs, reporting and statements.

Hypothesis

Uplifting the existing summary display and introducing portfolio performance will provide transparency and improve self-service and flexibility for Advisers and Clients.

What were the existing problems?

Besides feature parity, we had a few clear issues that needed to be addressed.

The existing on-page graphs were not flexible in displaying performance or fees, two important features that users are looking for in their superannuation and investments.

We had reports that could be generated with these details, but they were inconsistent between our two types of users, Advisers and Clients.

There were potential issues around declaring comparative performances.

Lastly, there were inconsistencies in terminology, naming and general language.

Initial ideas

Building on our existing features, the initial ideas proposed were to uplift the existing graphs and reporting, introduce detailed performance tabs, and provide further assistance to Advisers and Clients with their reporting and calculation methods.

Value and delight

Providing flexibility in our graphs would enable our users to view their performance in detail and with transparency.

That flexibility would allow users to self-serve, which in turn would reduce calls to our support centre. Introducing and delivering these features consistently would give us a competitive advantage.

The risks and assumptions

We had assumptions about user perspective and behaviour that we could take into further research and user testing. We thought that...

Users would want all fees excluded from their performance.

The majority of users wouldn’t care about the calculation method.

Investor personas would already know the benchmarks and wouldn’t require comparative performance.

We could be displaying different numbers in our calculations, which could be a legal issue.

Knowledge gaps

Further to the risks and assumptions, there were many questions about what the users really needed.

Which fees do we need to include or exclude?

Who wants to know about these fees and why?

Are there differences between a super and non-super account?

Is the focus on balance or performance?

How are we calculating this performance? Do people care?

Unpacking workshop

I facilitated a UX unpacking workshop to get more of the team involved and bridge the gap between all stakeholders. The purpose of the session was to answer any questions, hear more ideas, and iterate on and improve the concepts together. I prepared a few activities, the most successful being the dot voting session. We were able to review and set out clear steps before proceeding to set up our adviser survey.

User testing

We surveyed 24 adviser participants using SurveyMonkey, with 45 questions across 17 pages.

We presented each adviser with questions and screenshots from our concepts. The survey took approximately 26 minutes to complete.

We tested in 6 categories:

Adviser workflow

The client’s view

Charting and display

Fees inclusion

Benchmarking

Reporting

Research insights

Uplifting the existing summary display and introducing portfolio performance will provide transparency and improve self-service and flexibility for Advisers and Clients.

A detailed, transparent performance tool

We built an experience that gave Advisers an efficient, relevant overview of their Client’s account, improving their workflow and gaining a competitive edge through further use of the platform.

Clients can gain a transparent understanding of what is really happening with their money and performance.

We improved the existing balance-over-time graph by displaying a detailed summary, built a tab for account performance and periodic performance, and added further options within reporting.

The interface was also uplifted to be consistent across the Adviser’s view (when viewing the client’s account) and the Client’s own view.